Designing a Hiring Platform to Evaluate Technical Talent at Scale

Project Summary

At Onramp, I designed a centralized hiring platform that enabled the selection team to evaluate technical candidates at scale by consolidating interview feedback, candidate data, and evaluation signals into a single workflow.

Company: Onramp

Role: Lead UX Designer

Duration: 1 Month

Platform: Internal Hiring Tools

Context

As Onramp expanded its hiring program, the selection team needed to evaluate a rapidly growing number of technical candidates from non-traditional backgrounds.

However, the existing hiring workflow relied heavily on spreadsheets and disconnected tools, which created operational friction across the evaluation process.

🔎 The Problem

The hiring process lacked a centralized system for evaluating candidates.

Without a unified workflow:

Reviewers struggled to synthesize multiple evaluation signals

Recruiters spent significant time coordinating candidate updates

Candidate comparisons required manual spreadsheet work

Important context about candidate performance was difficult to track

As candidate volume increased, the process became increasingly difficult to scale.

💻 My Role

I led the UX design of a centralized candidate evaluation system and collaborated closely with founders, the selection team, and engineering.

My responsibilities included:

Stakeholder interviews

Workflow mapping

Information architecture

UX and UI design

Designing batch evaluation tools

Designing automated candidate communication flows

⚙️ Understanding the system workflow

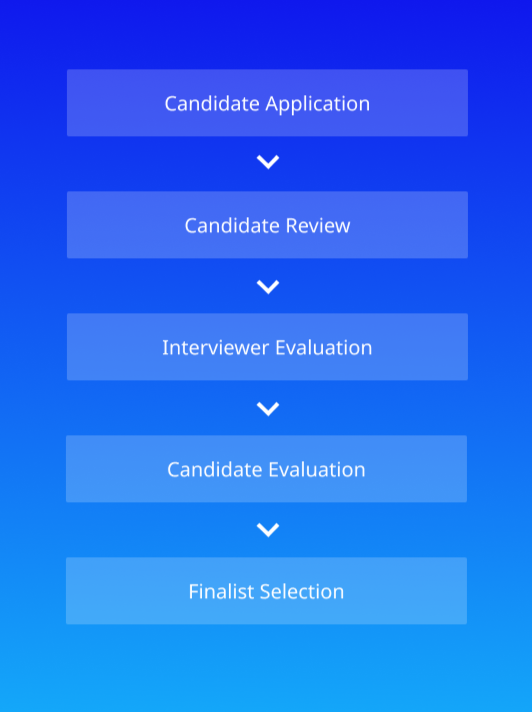

Before designing interfaces, I mapped the end-to-end hiring workflow to understand how candidates moved through the selection process.

This exercise revealed the core components of the system:

A candidate feedback interface for interviewers

A hiring dashboard for reviewing and comparing candidates

A candidate profile page containing interview feedback history

A finalist spotlight interface for presenting top candidates to partner companies

🤝 Key Insights

Stakeholder interviews and workflow analysis surfaced three insights:

Hiring decisions require context

Recruiters evaluate candidates using multiple signals including interview scores, written feedback, and candidate history.

Comparison is critical

Recruiters rarely evaluate candidates in isolation. They frequently compare multiple candidates to find top talent.

Communication was time-consuming

Selection team members spent significant time sending manual candidate updates and coordinating through spreadsheets.

These insights informed the design of a centralized system that supported scalable candidate evaluation.

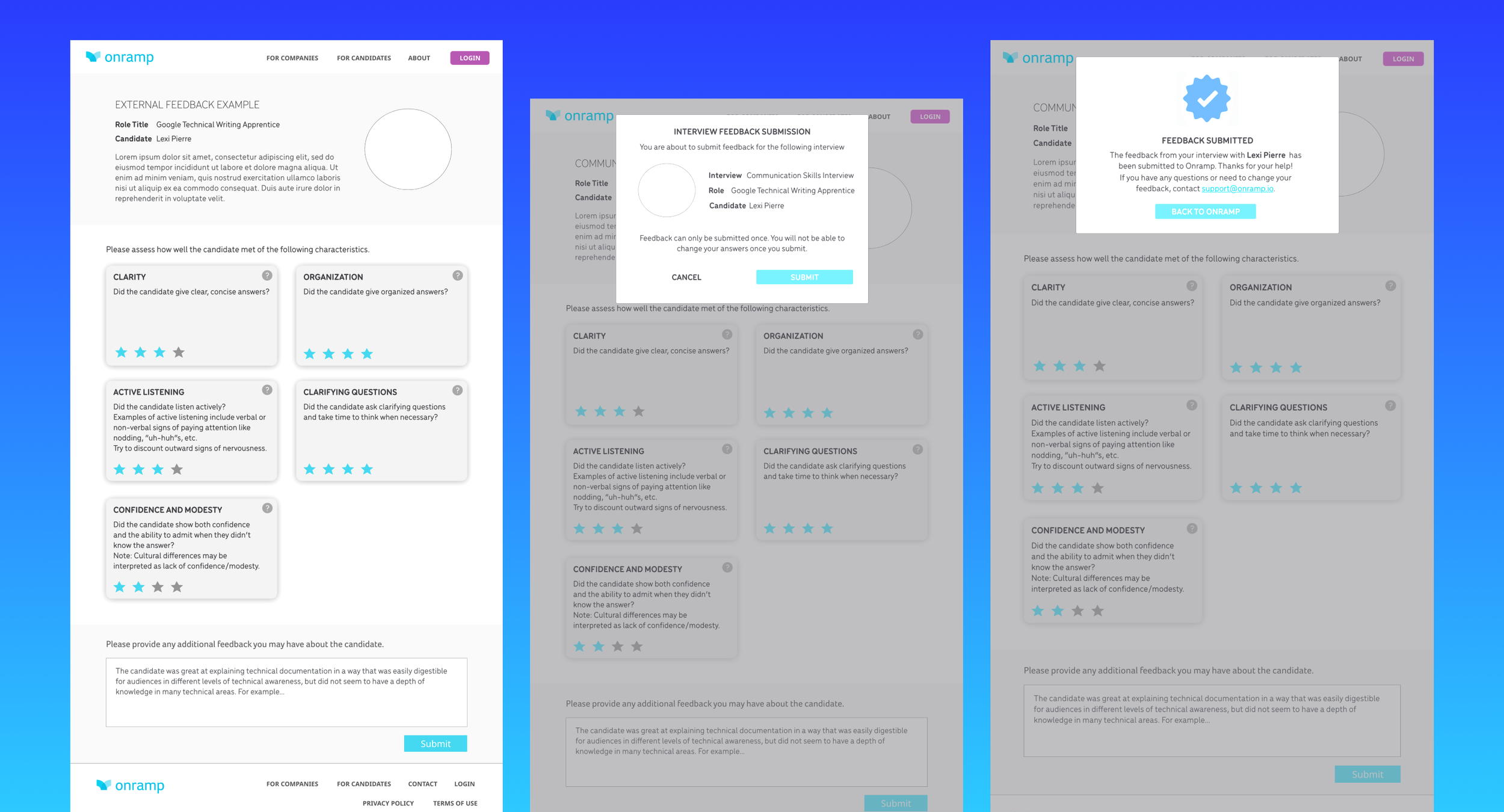

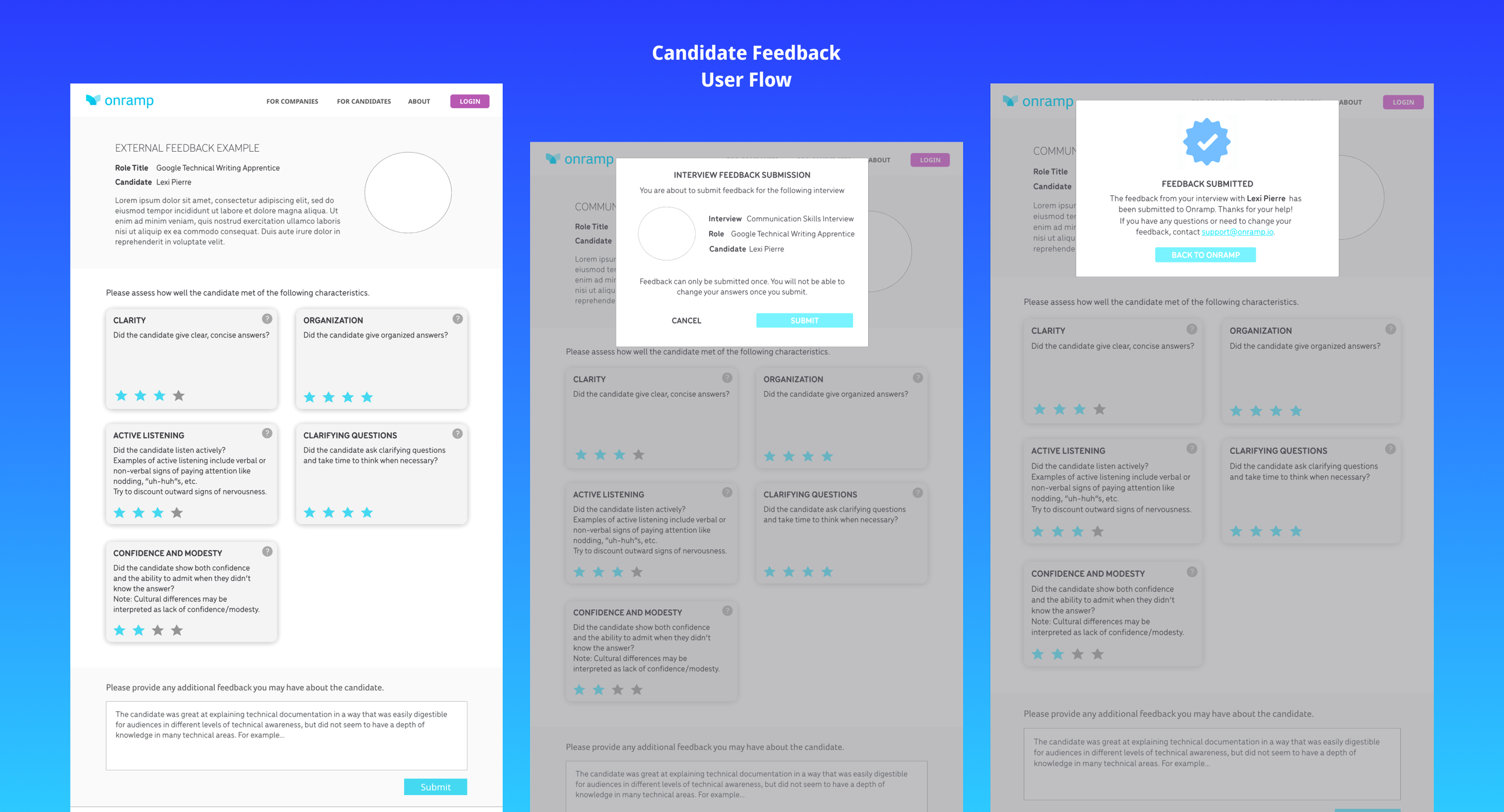

🎯 Structuring interview feedback

One of the biggest challenges in the hiring process was inconsistent interview feedback.

To improve evaluation quality, I designed structured feedback fields that captured standardized signals across interviews.

Key design decisions:

Structured feedback fields for consistent scoring

Quick scoring inputs to reduce reviewer friction

A confirmation state after submission to prevent duplicate feedback

Standardized feedback made it easier to compare candidates and improved fairness and consistency in the evaluation process.
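To make the idea of structured feedback concrete, here is a minimal sketch of how such a record could be modeled. It is an illustration only, not the platform's actual implementation: the 1 to 5 scale, the criterion names, and the `InterviewFeedback` and `FeedbackStore` names are all assumptions I am introducing for the example.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical scoring scale; the real platform's scale is not specified here.
SCORE_RANGE = range(1, 6)  # allows 1, 2, 3, 4, 5


@dataclass(frozen=True)
class InterviewFeedback:
    """One interviewer's structured feedback for one candidate (illustrative)."""

    candidate_id: str
    interviewer_id: str
    scores: dict[str, int]  # criterion name -> score on the shared scale
    notes: str = ""

    def __post_init__(self) -> None:
        # Every criterion uses the same scale, which keeps candidates comparable.
        for criterion, score in self.scores.items():
            if score not in SCORE_RANGE:
                raise ValueError(f"{criterion}: score {score} is outside 1-5")


class FeedbackStore:
    """In-memory store that accepts one submission per interviewer per candidate."""

    def __init__(self) -> None:
        self._by_key: dict[tuple[str, str], InterviewFeedback] = {}

    def submit(self, feedback: InterviewFeedback) -> bool:
        """Return True if stored, False if this interviewer already submitted."""
        key = (feedback.candidate_id, feedback.interviewer_id)
        if key in self._by_key:
            return False  # the UI would show the confirmation state instead
        self._by_key[key] = feedback
        return True

    def average_score(self, candidate_id: str) -> float:
        """Mean of every criterion score recorded for the candidate."""
        values = [
            score
            for (cid, _), fb in self._by_key.items()
            if cid == candidate_id
            for score in fb.scores.values()
        ]
        if not values:
            raise KeyError(f"no feedback recorded for {candidate_id}")
        return sum(values) / len(values)
```

Because every record shares the same fields and scale, a comparison view can aggregate scores directly instead of interpreting free-form notes.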

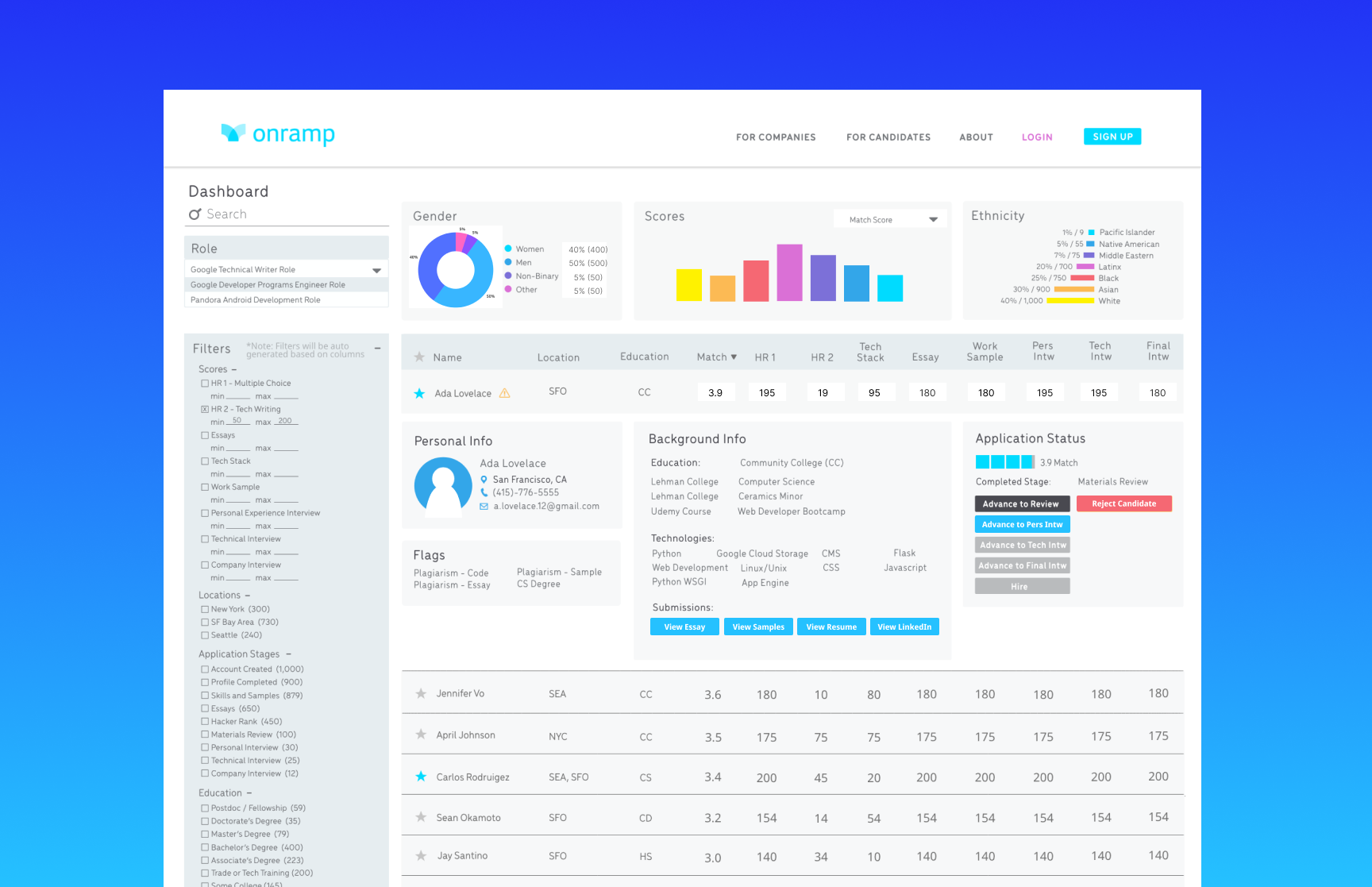

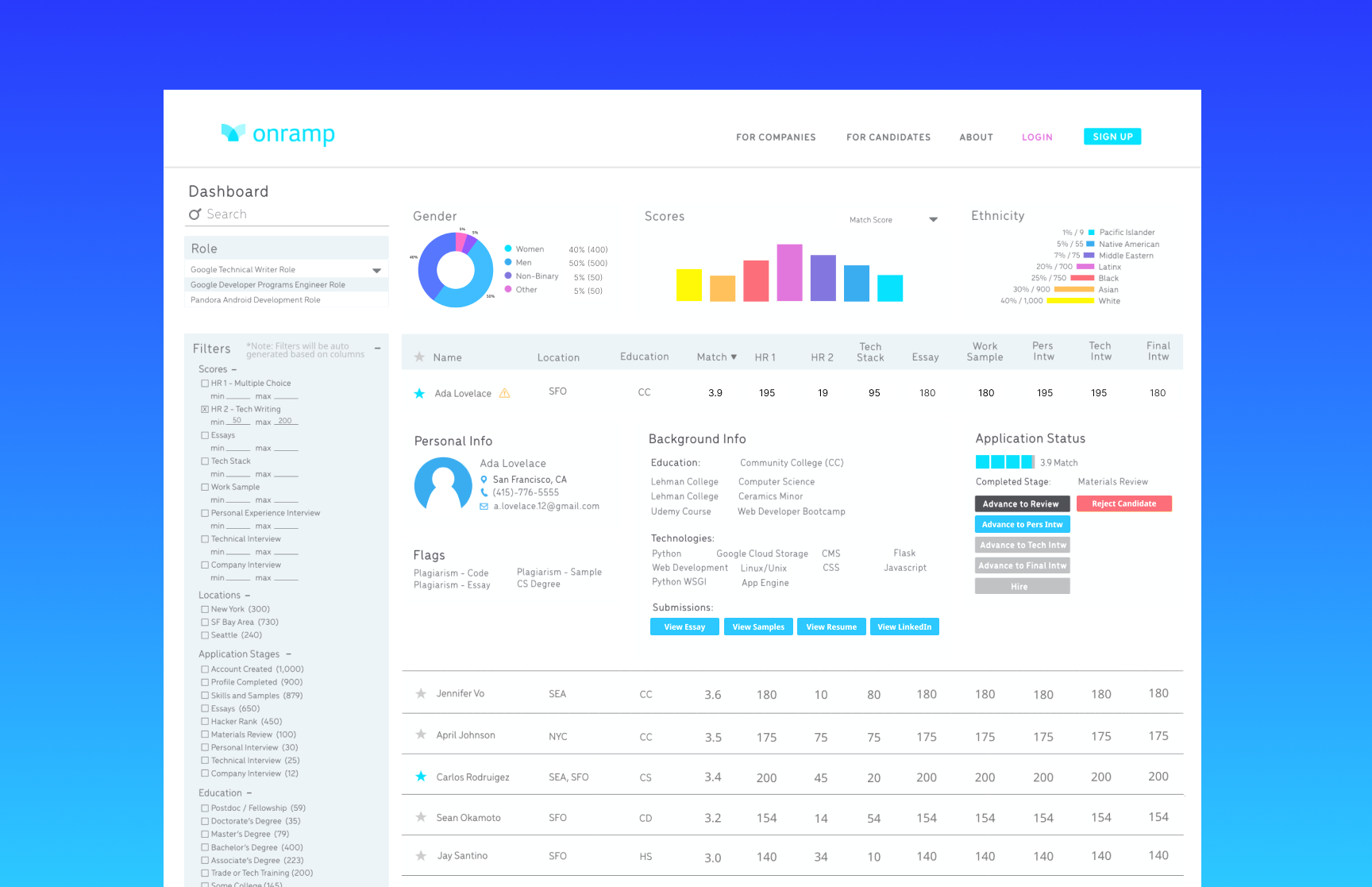

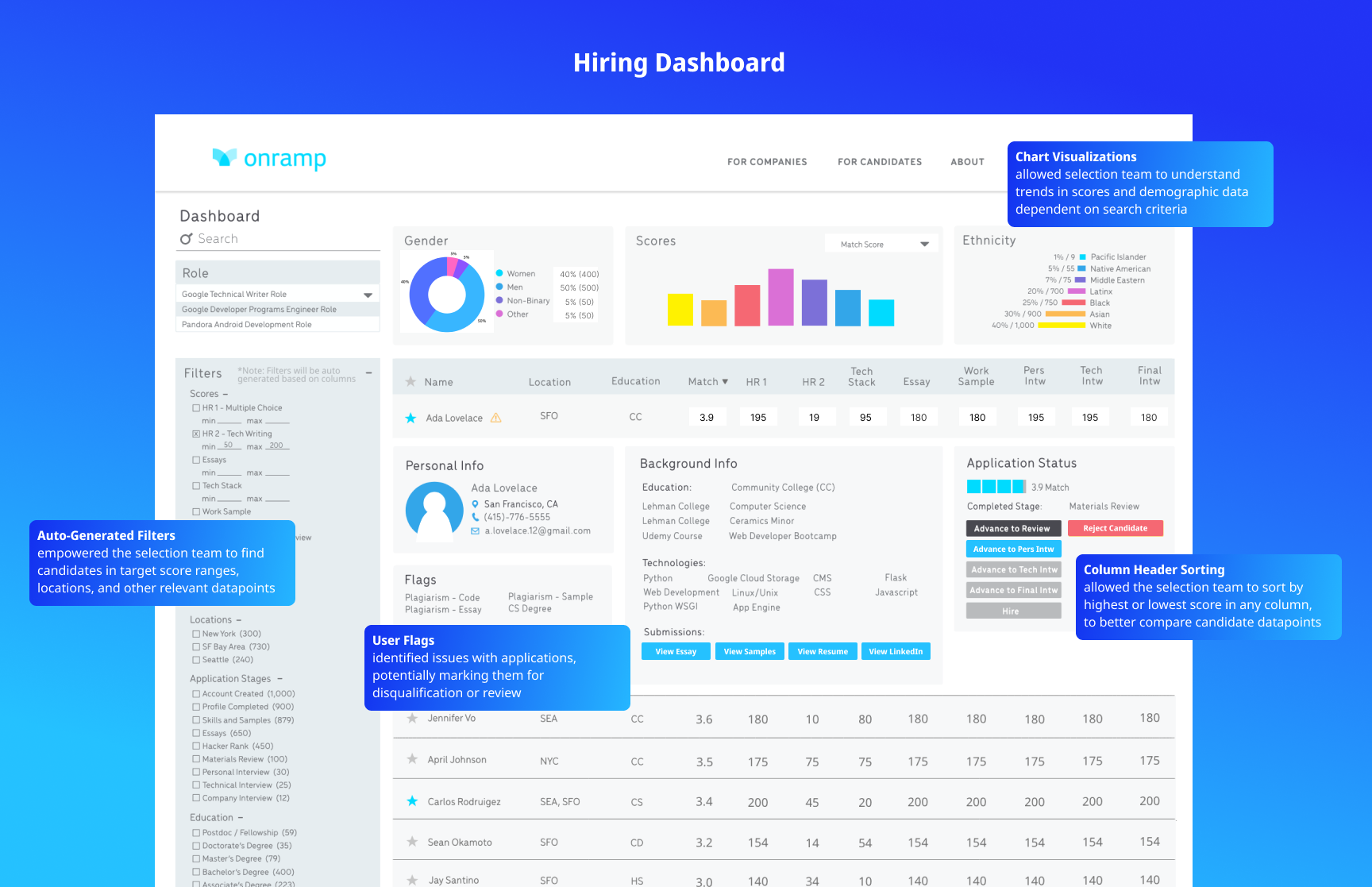

🎯 Evaluating candidates at scale

The hiring dashboard provided the selection team with a centralized interface to review and compare candidates.

Key features included:

Filters for scores, locations, and candidate attributes

Sorting tools to identify top performers

Batch actions to move candidates forward, reject, or hire

Automated email communication for candidate updates

The dashboard reduced manual coordination and enabled recruiters to quickly identify high-performing candidates as application volume increased.
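The dashboard behavior described above (filter, sort, then act on a batch) can be sketched roughly as follows. This is a simplified illustration under my own assumptions, not the shipped code: the `Candidate` fields, the `Stage` values, and the message format are hypothetical, and the "emails" are plain strings rather than real messages.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Stage(Enum):
    # Hypothetical pipeline stages for the example.
    APPLIED = "applied"
    INTERVIEW = "interview"
    HIRED = "hired"
    REJECTED = "rejected"


@dataclass
class Candidate:
    name: str
    location: str
    score: float
    stage: Stage = Stage.APPLIED


def filter_candidates(
    candidates: list[Candidate],
    min_score: float = 0.0,
    location: str | None = None,
) -> list[Candidate]:
    """Keep candidates at or above min_score, optionally in one location."""
    return [
        c
        for c in candidates
        if c.score >= min_score and (location is None or c.location == location)
    ]


def top_performers(candidates: list[Candidate], limit: int) -> list[Candidate]:
    """Sort by score, highest first, and keep the first `limit` candidates."""
    return sorted(candidates, key=lambda c: c.score, reverse=True)[:limit]


def batch_update(candidates: list[Candidate], new_stage: Stage) -> list[str]:
    """Move every selected candidate to new_stage and queue one update each."""
    messages = []
    for candidate in candidates:
        candidate.stage = new_stage
        # Stand-in for the automated email a real system would send.
        messages.append(f"{candidate.name}: status updated to {new_stage.value}")
    return messages


pool = [
    Candidate("Ada", "NYC", 4.5),
    Candidate("Lin", "SF", 3.0),
    Candidate("Sam", "NYC", 4.8),
]
shortlist = top_performers(
    filter_candidates(pool, min_score=4.0, location="NYC"), limit=2
)
queued = batch_update(shortlist, Stage.INTERVIEW)
```

Tying the status change and the candidate update to a single batch action is what removes the manual step of updating a spreadsheet and then emailing each candidate separately.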

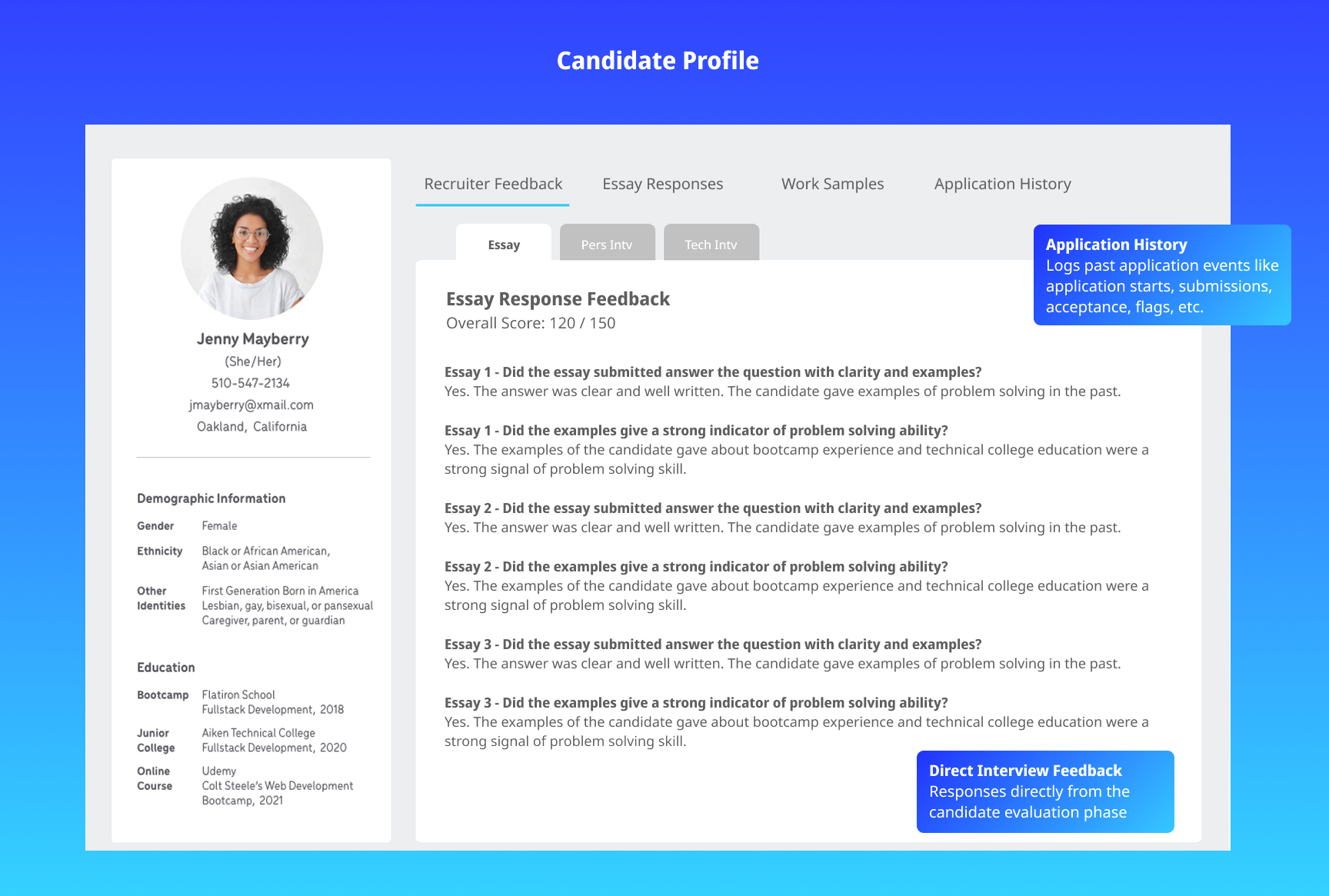

🎯 Candidate Profiles

Each candidate profile provided a detailed view of interview history and evaluation signals.

Profiles included:

Interview feedback and scores

Candidate background and technical experience

Historical evaluation data

This allowed reviewers to quickly understand a candidate’s full evaluation context without navigating across multiple tools.

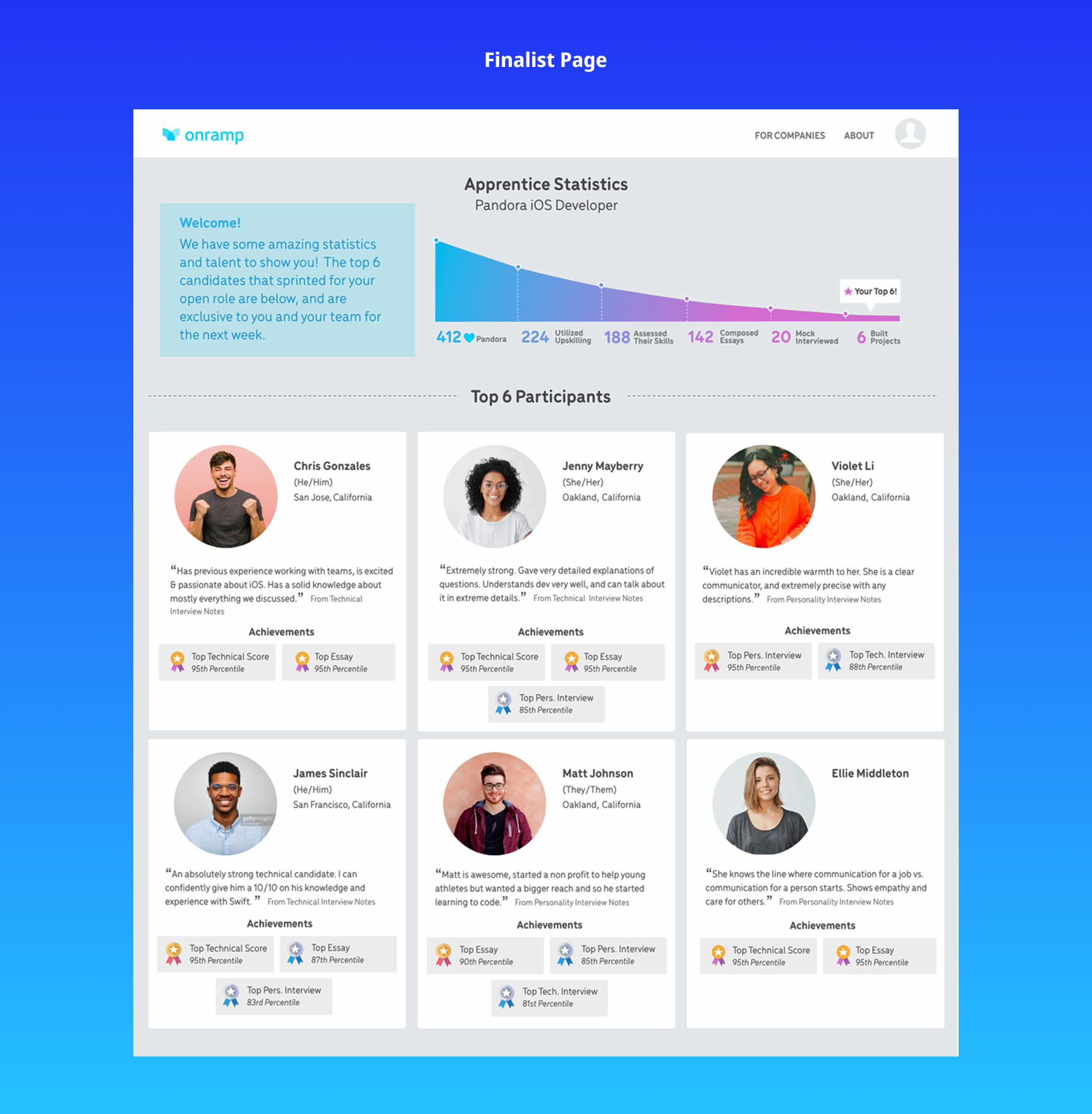

🎯 Highlighting finalists for partner companies

After identifying top candidates, the system needed a way to present them clearly to partner companies.

The finalist interface highlighted:

Candidate progress in the selection process

Key performance signals

Positive interview feedback

This helped partner companies quickly understand each candidate’s strengths while reinforcing Onramp’s mission of surfacing overlooked technical talent.

✨ Project Outcomes

The new system reduced operational friction across the hiring process and helped the team evaluate candidates efficiently as hiring volume increased. Results included:

✔ Streamlined candidate review workflows

✔ Centralized interview feedback and evaluation data

✔ Reduced manual coordination across the selection team

✔ Automated candidate communication

✔ Enabled scalable candidate evaluation

✨ What I would improve

Given more time, I would explore adding predictive signals or candidate scoring models to further support recruiter decision-making. I would also test ways to surface standout candidates earlier in the pipeline.